EleutherAI Releases GPT-NeoX-20B, A 20-billion-parameter AI Language

By a mysterious writer

Description

GPT-NeoX-20B, a 20-billion-parameter natural language processing (NLP) AI model similar to GPT-3, has been open-sourced.

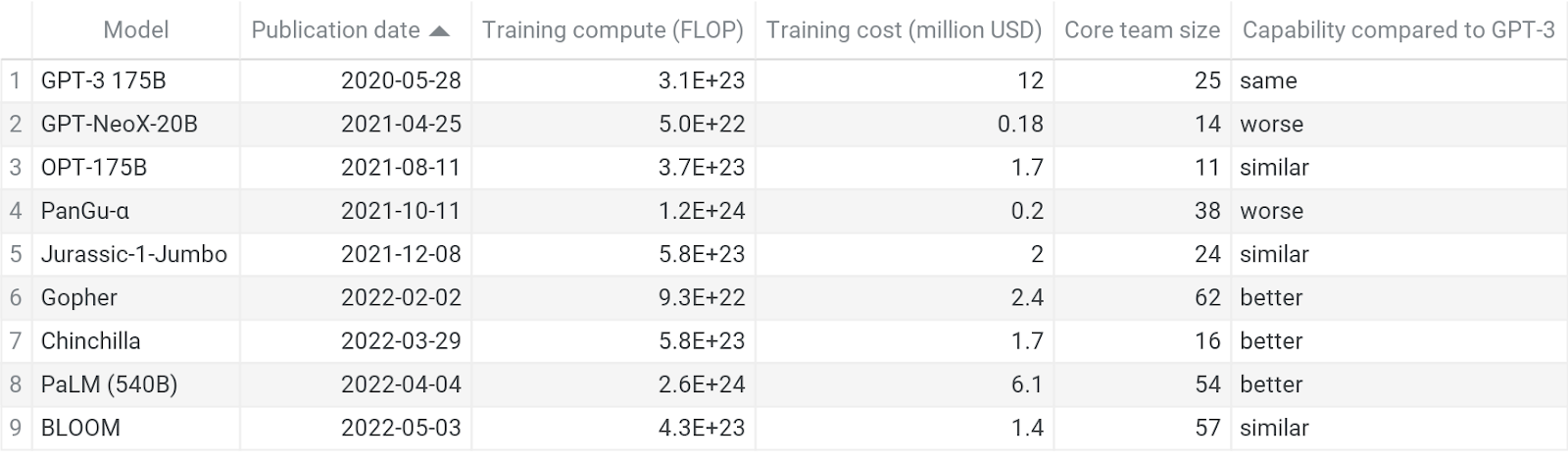

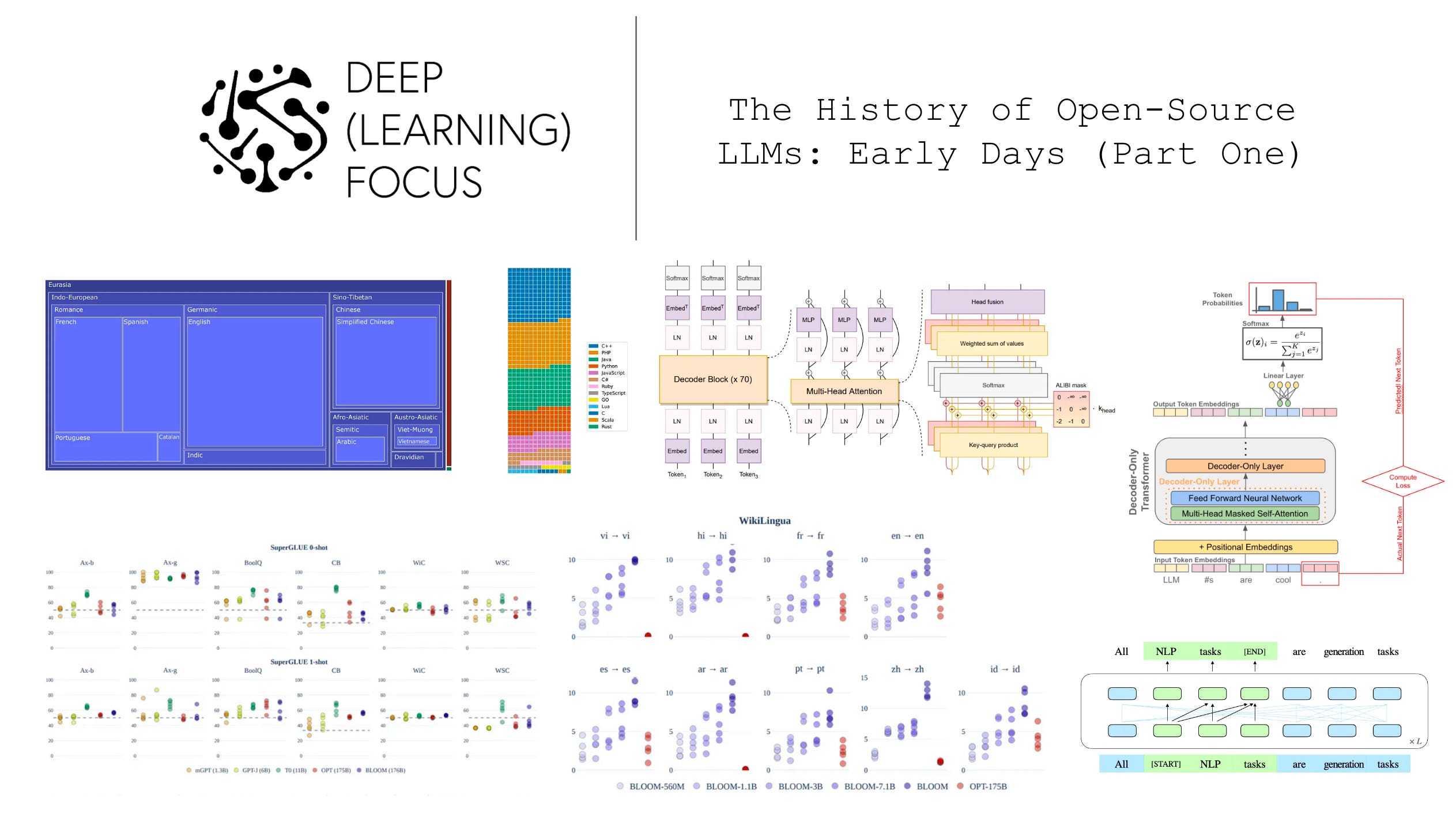

The History of Open-Source LLMs: Early Days (Part One)

GPT-NeoX: A 20 Billion Parameter NLP Model on Gradient Multi-GPU

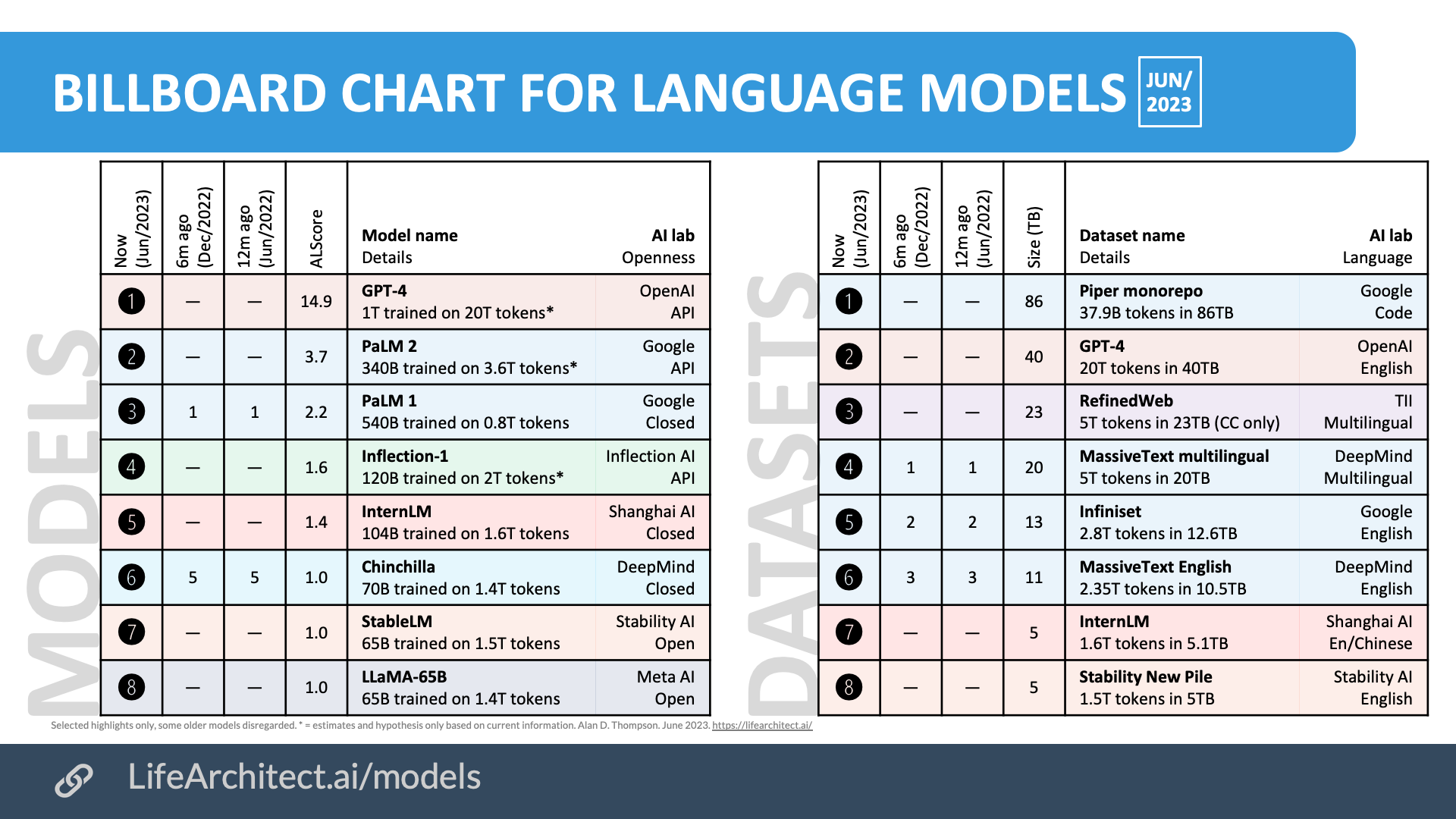

Inside language models (from GPT to Olympus) – Dr Alan D. Thompson – Life Architect

EleutherAI: When OpenAI Isn't Open Enough - IEEE Spectrum

Cerebras-GPT: A Family of Open, Compute-efficient, Large Language Models - Cerebras

The History of Open-Source LLMs: Early Days (Part One), by Cameron R. Wolfe, Ph.D., Nov, 2023

A Systematic Evaluation of Large Language Models of Code – arXiv Vanity

[N] EleutherAI announces a 20 billion parameter model, GPT-NeoX-20B, with weights being publicly released next week : r/MachineLearning

CoreWeave Unlocks the Power of EleutherAI's GPT-NeoX-20B, by Max
