Can You Close the Performance Gap Between GPU and CPU for Deep Learning Models? - Deci

Description

How can we optimize CPU performance for deep learning models? This post discusses model efficiency and the gap between GPU and CPU inference. Read on.

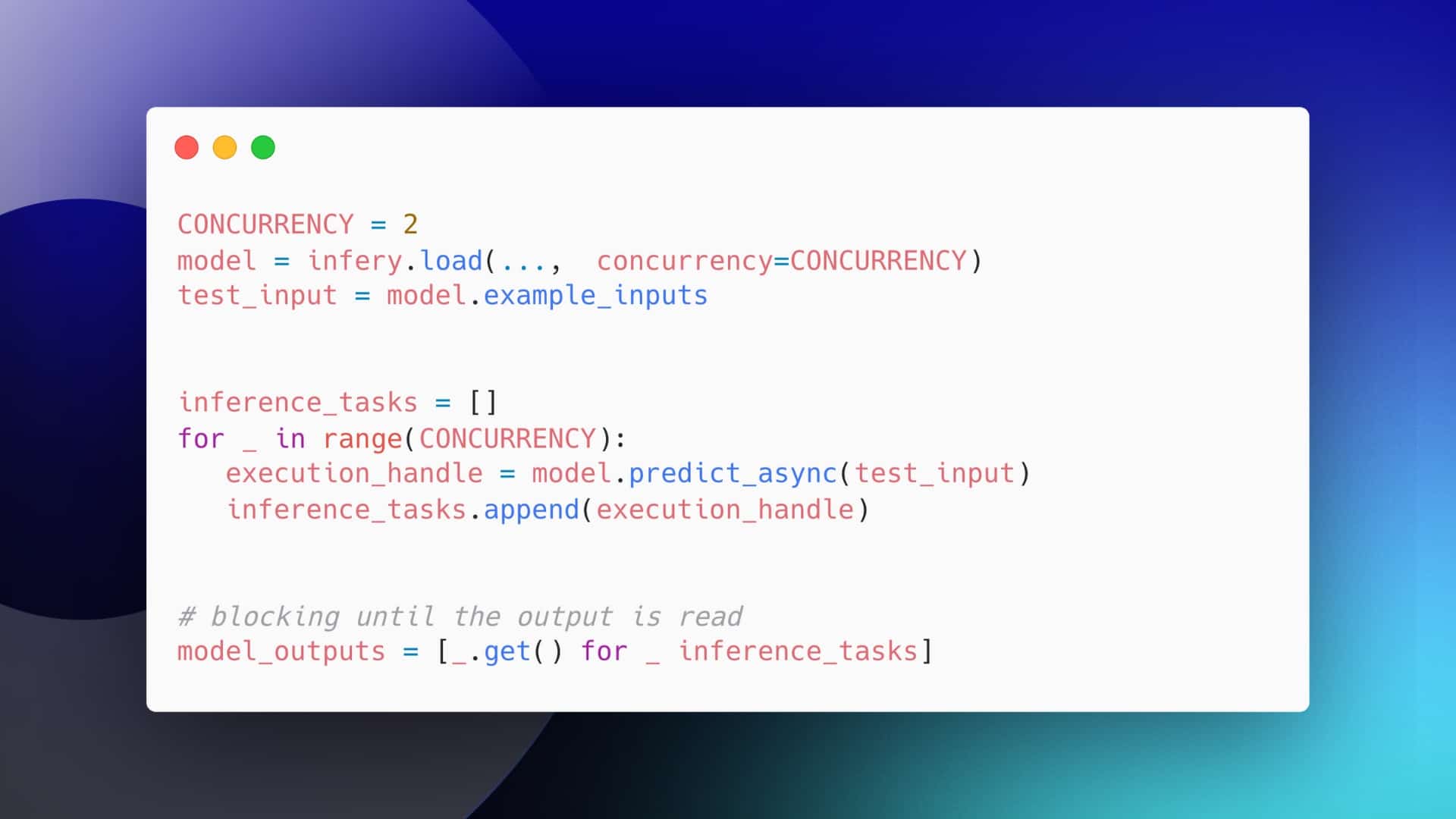

How to Maximize Throughput of Your Deep Learning Inference Pipeline

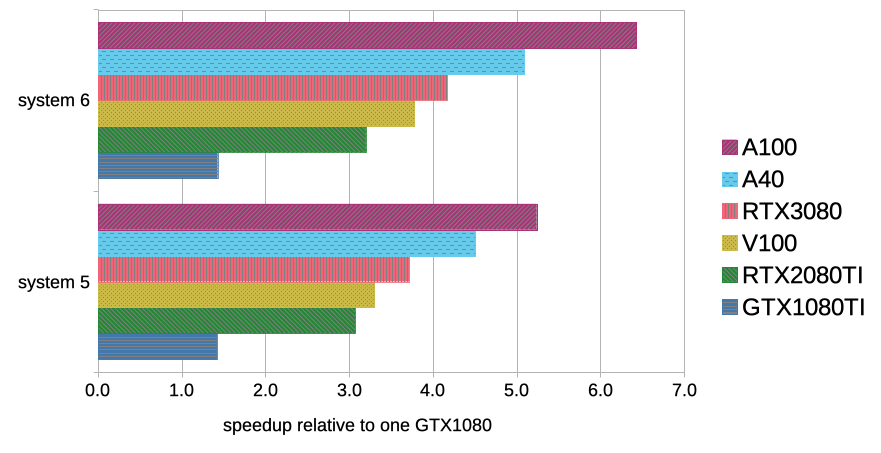

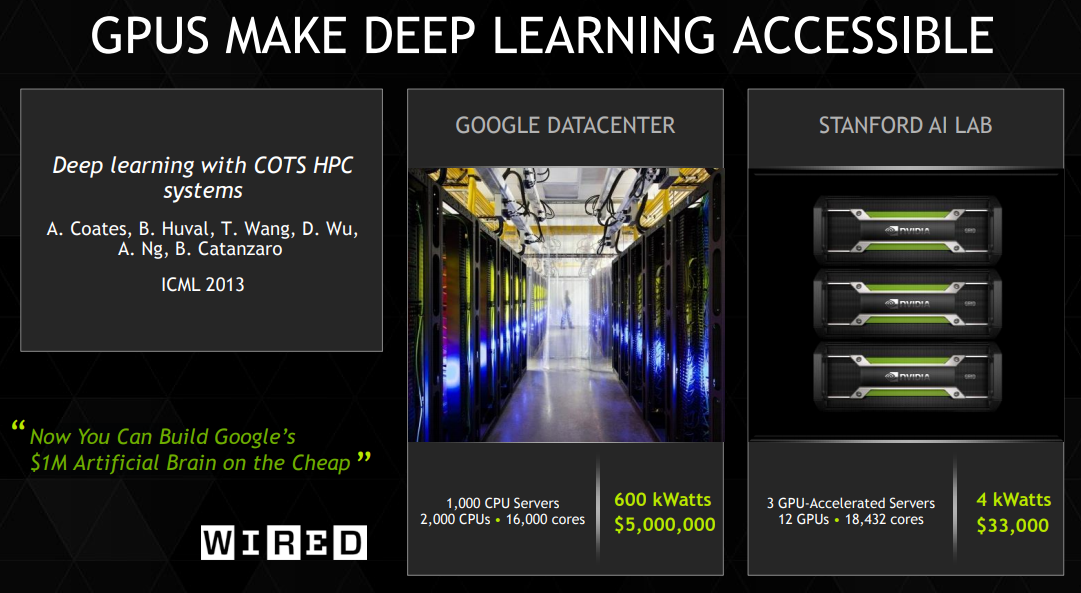

Benchmarking GPUs for Mixed Precision Training with Deep Learning

The Correct Way to Measure Inference Time of Deep Neural Networks, by Amnon Geifman

Trending stories published on Deci AI – Medium

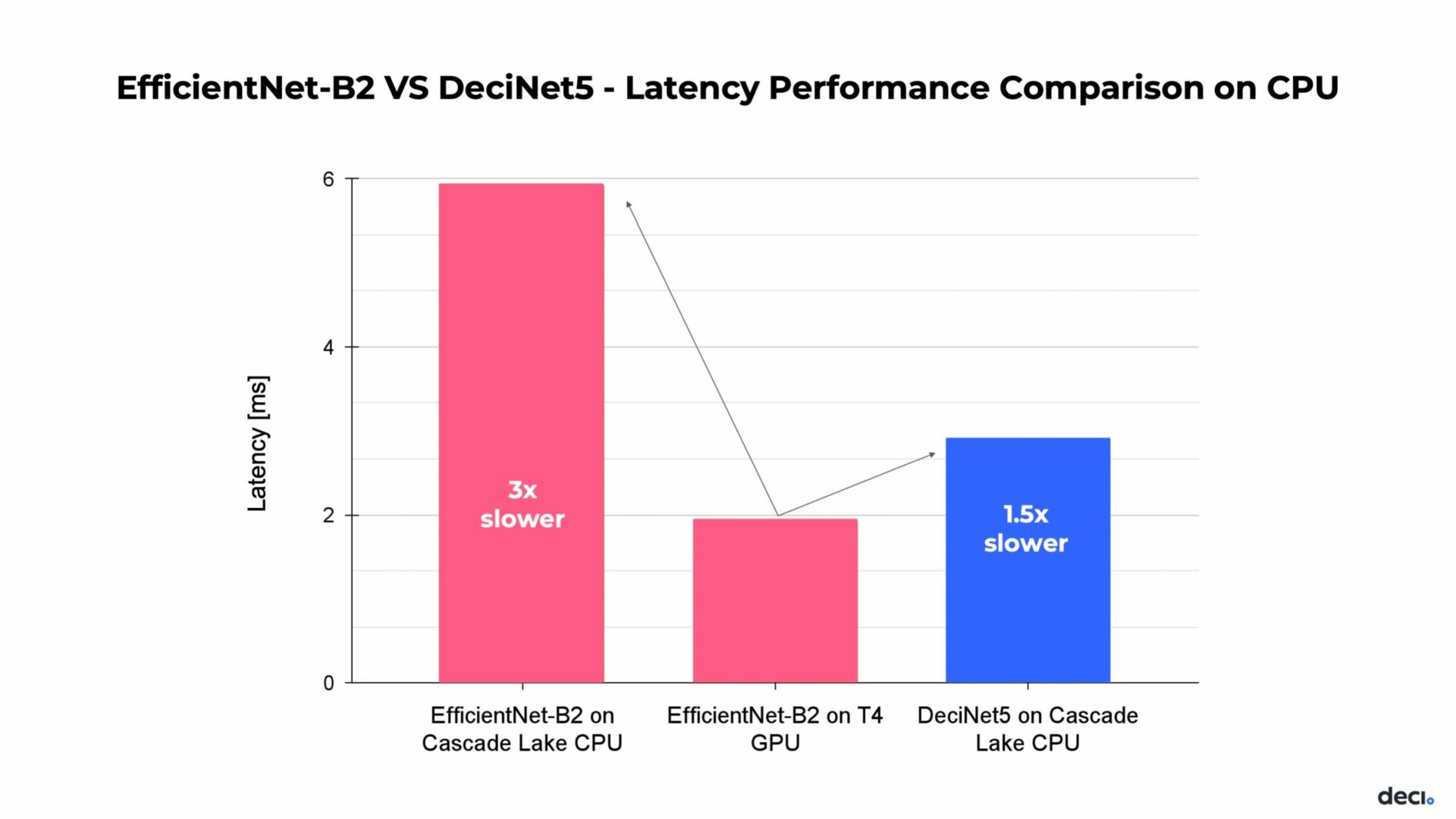

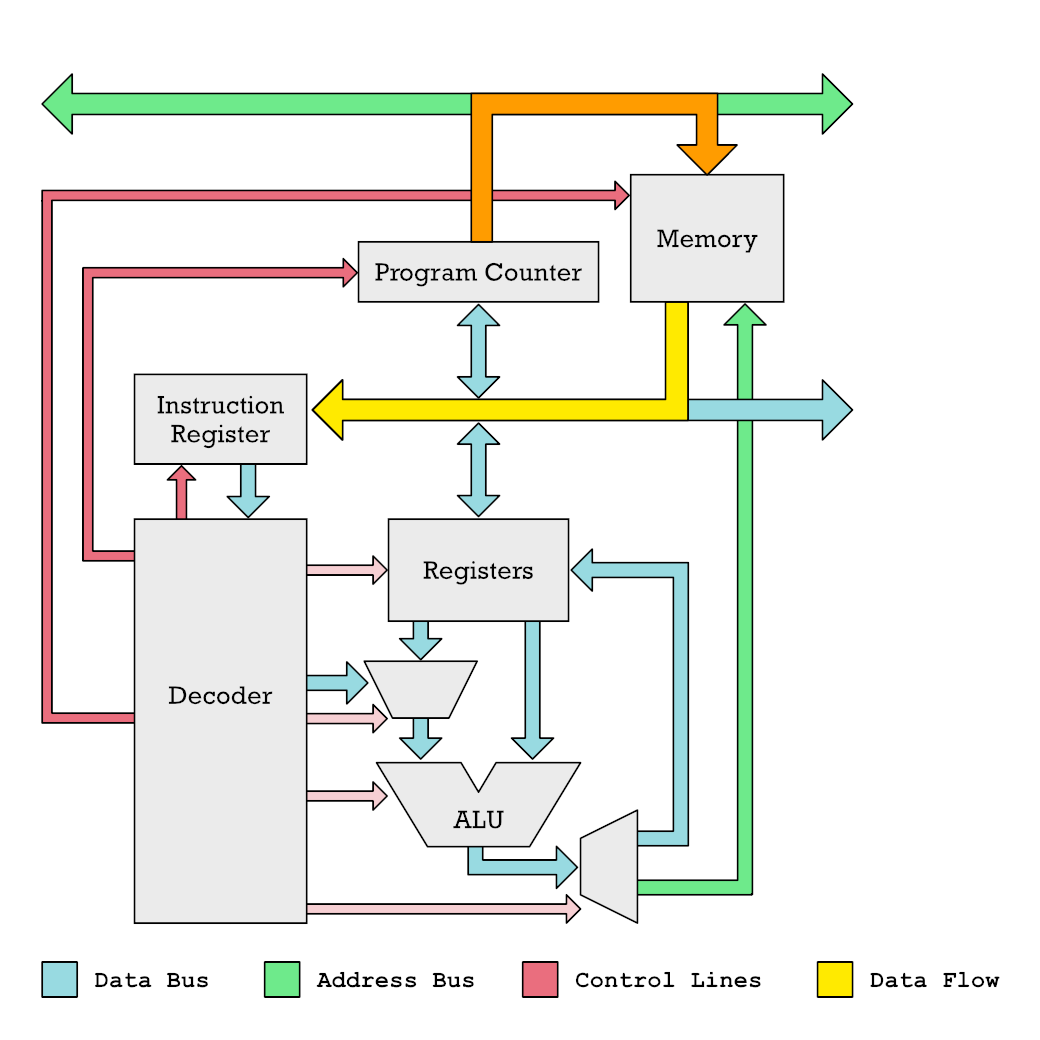

Vector Processing on CPUs and GPUs Compared, by Erik Engheim

What is GPU and CPU (differences and similarities)? - Quora

CPU vs GPU in Machine Learning Algorithms: Which is Better?

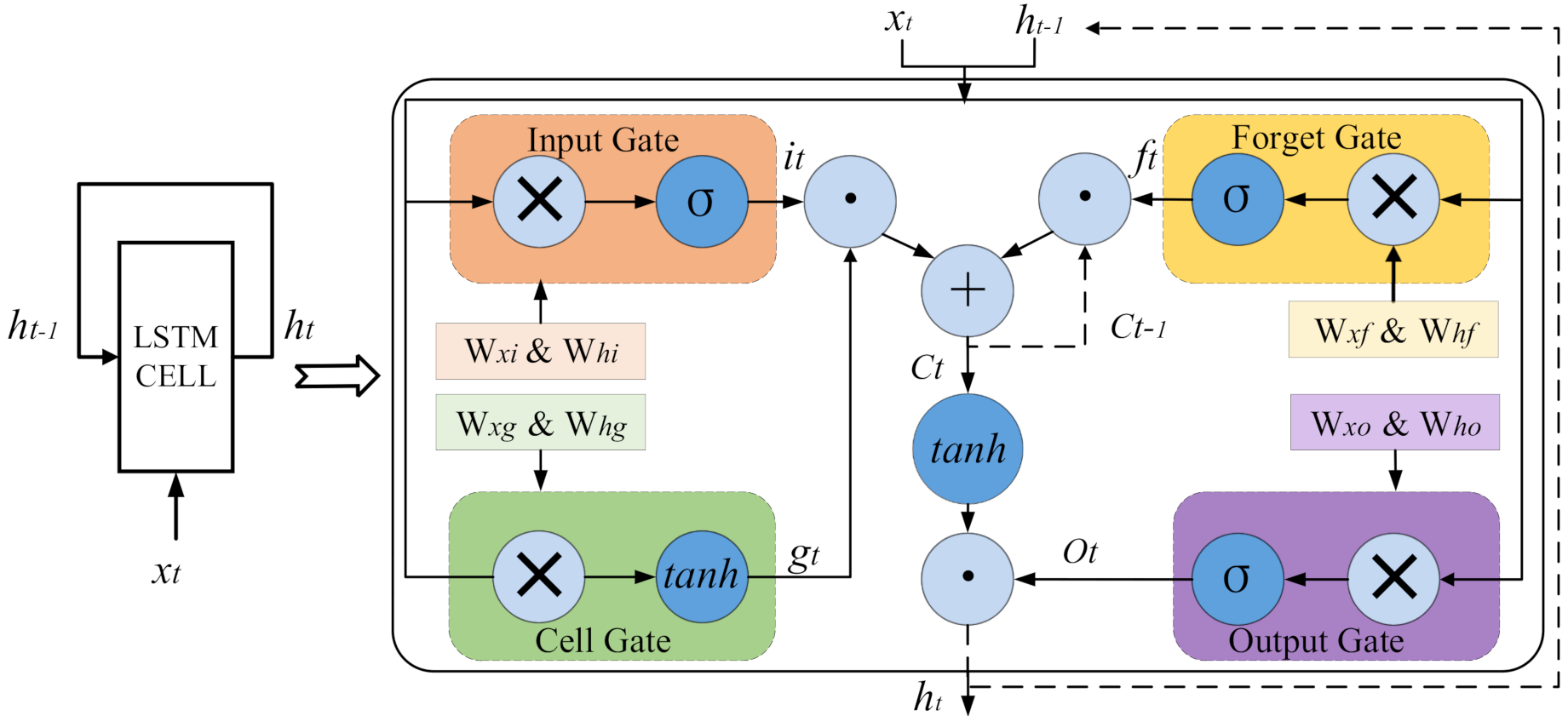

Electronics, Free Full-Text

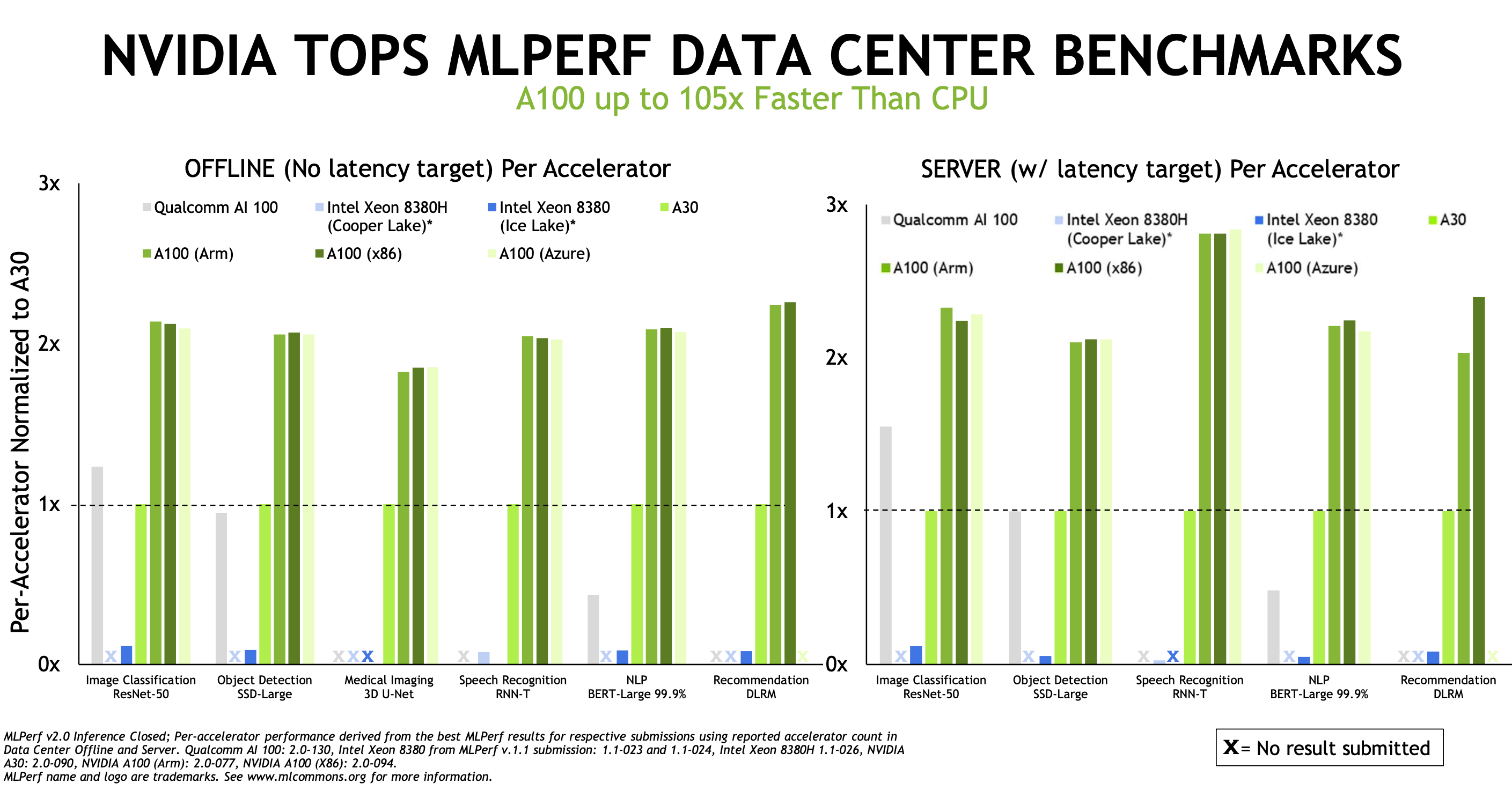

NVIDIA TensorRT-LLM Supercharges Large Language Model Inference on NVIDIA H100 GPUs

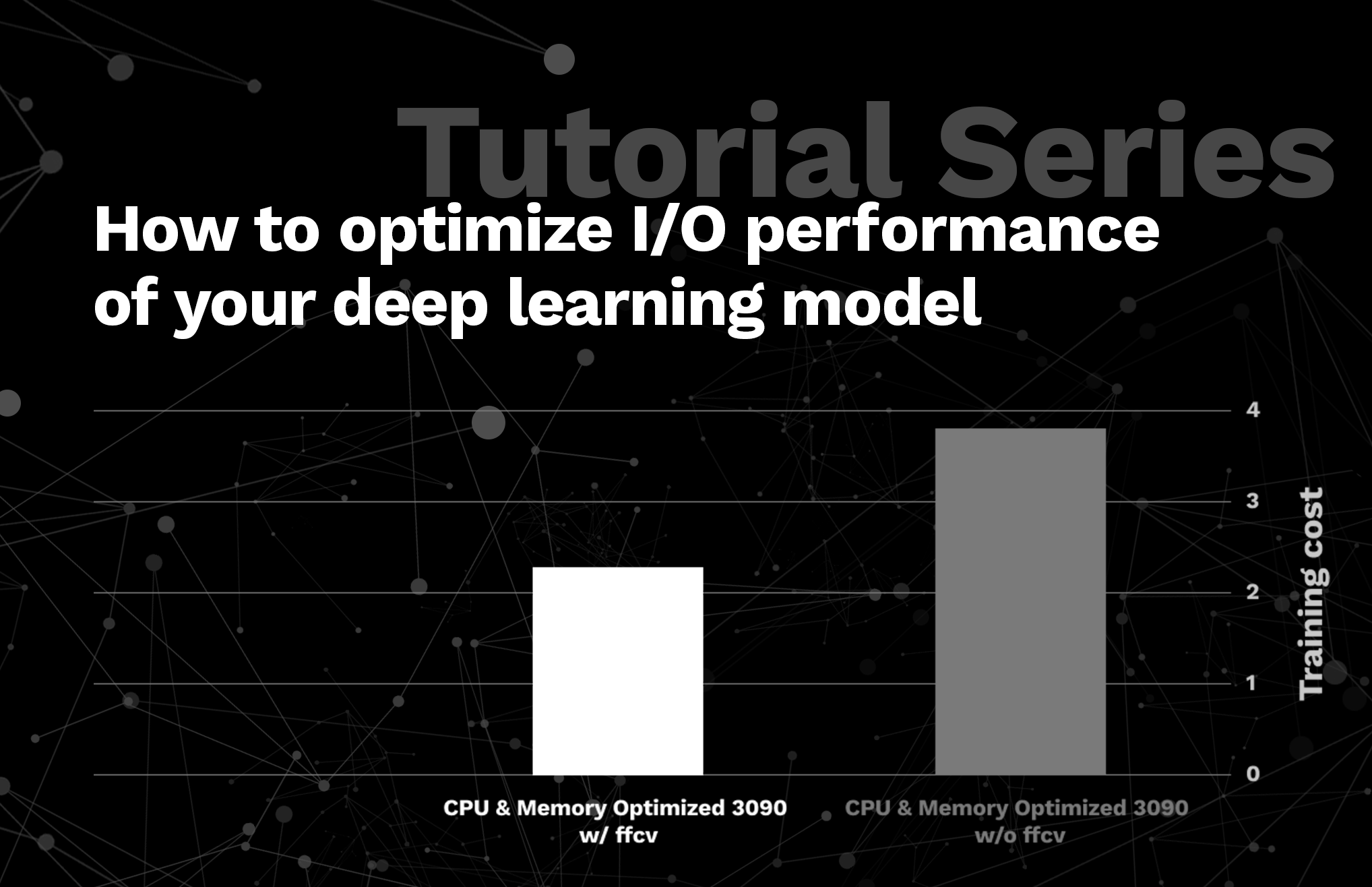

Tutorial Series: How to optimize I/O performance of your deep learning model

(PDF) Performance of CPUs/GPUs for Deep Learning workloads

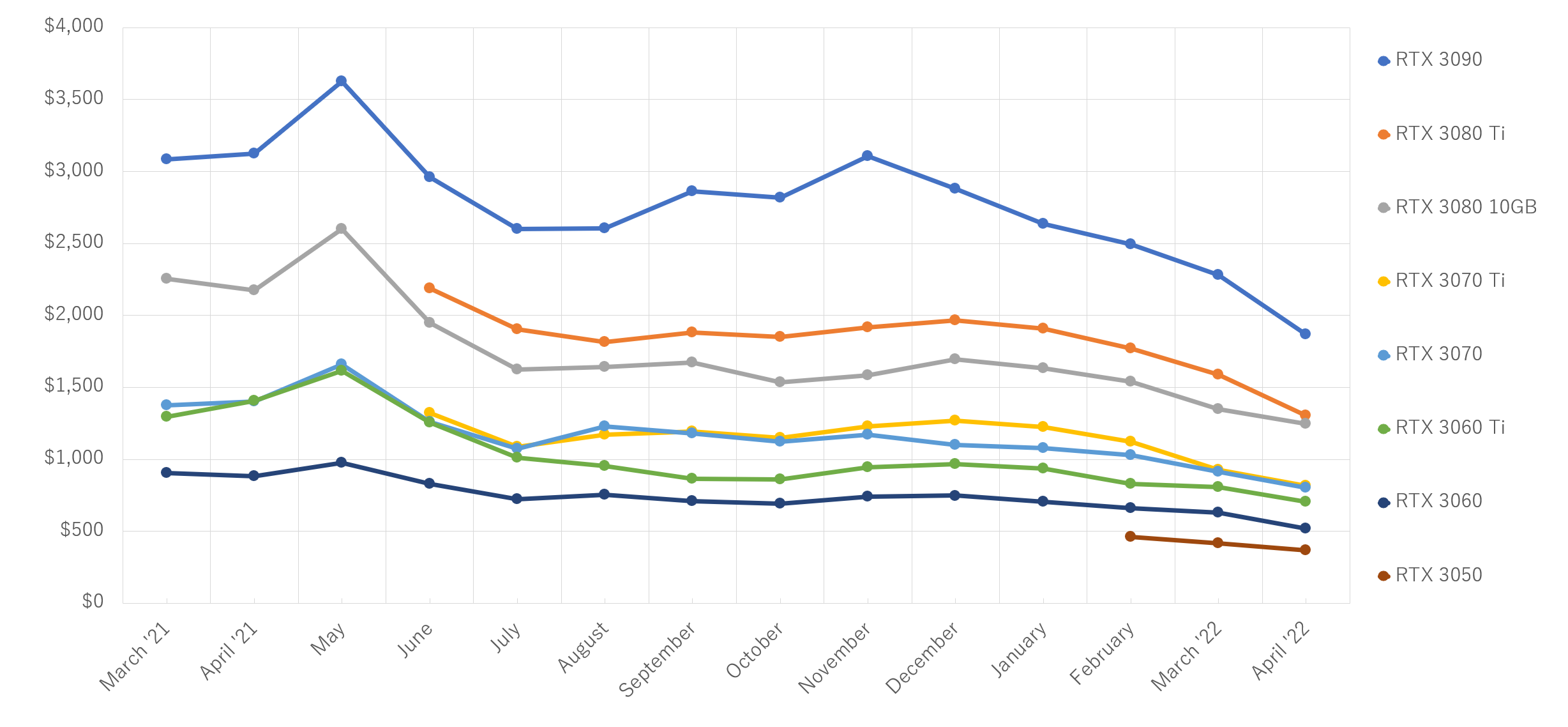

Hardware for Deep Learning. Part 3: GPU, by Grigory Sapunov

Developer Guide :: NVIDIA Deep Learning TensorRT Documentation
