Visualizing the gradient descent method

By a mysterious writer

Description

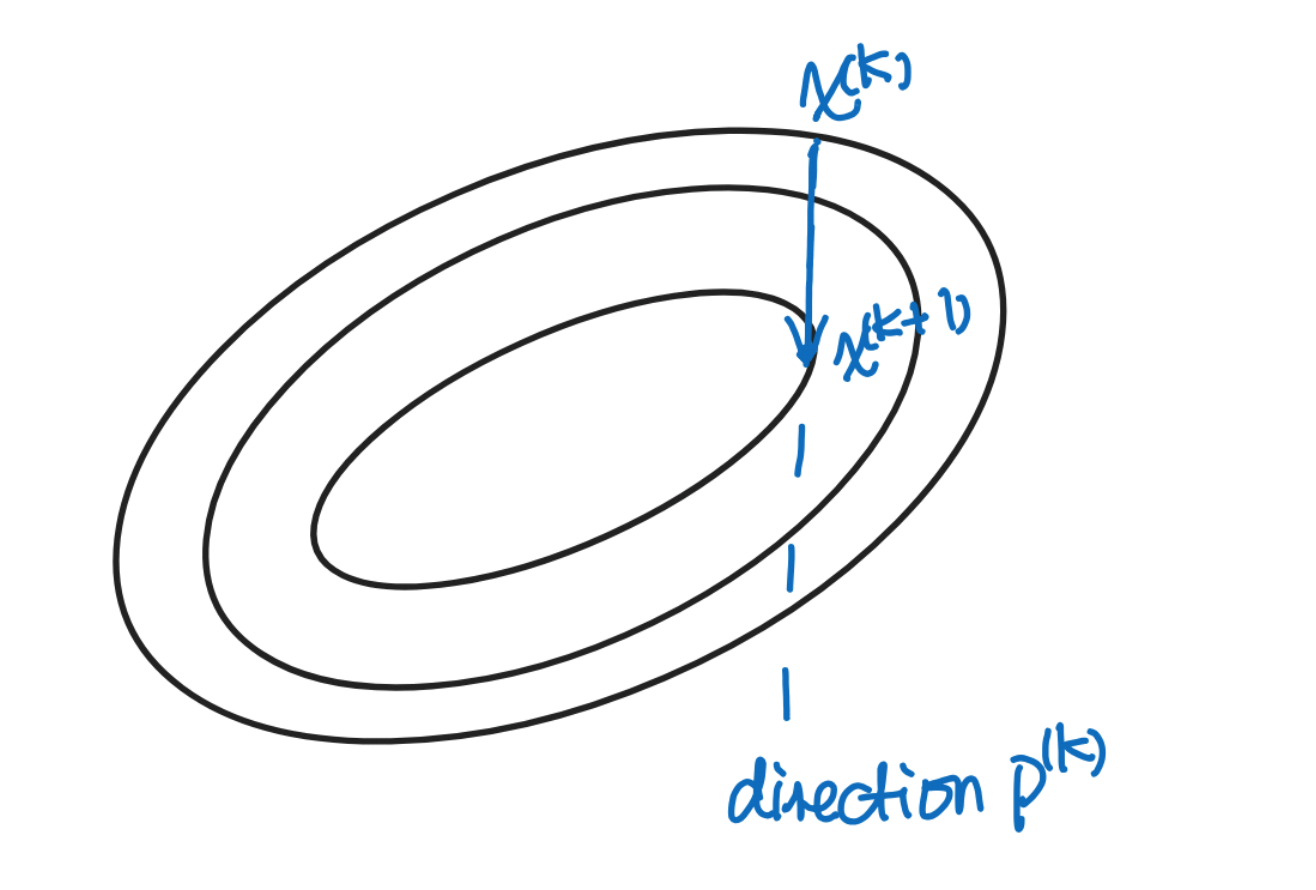

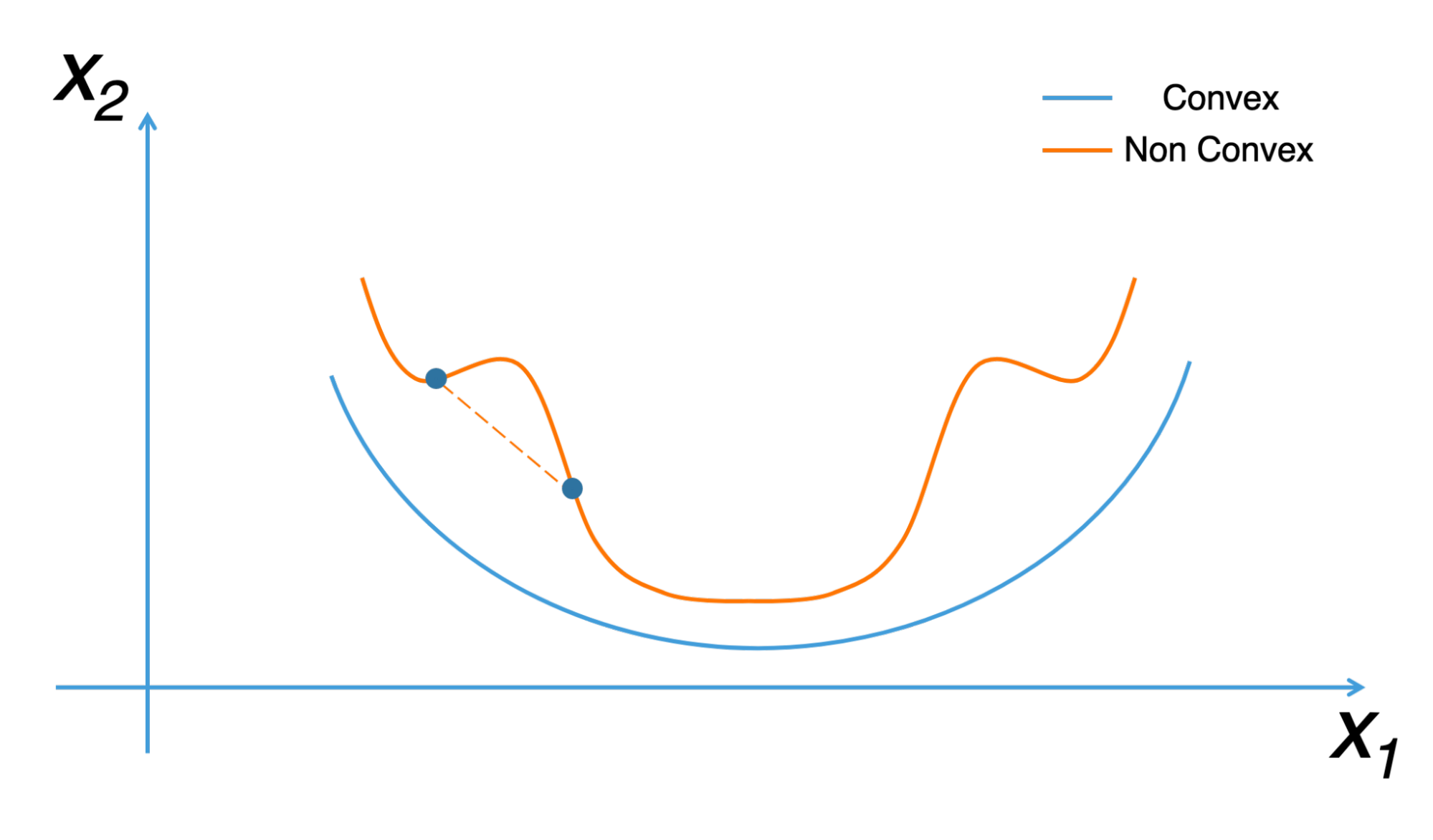

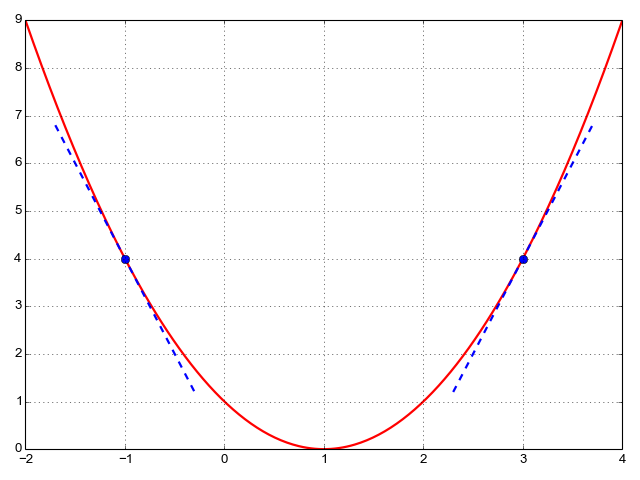

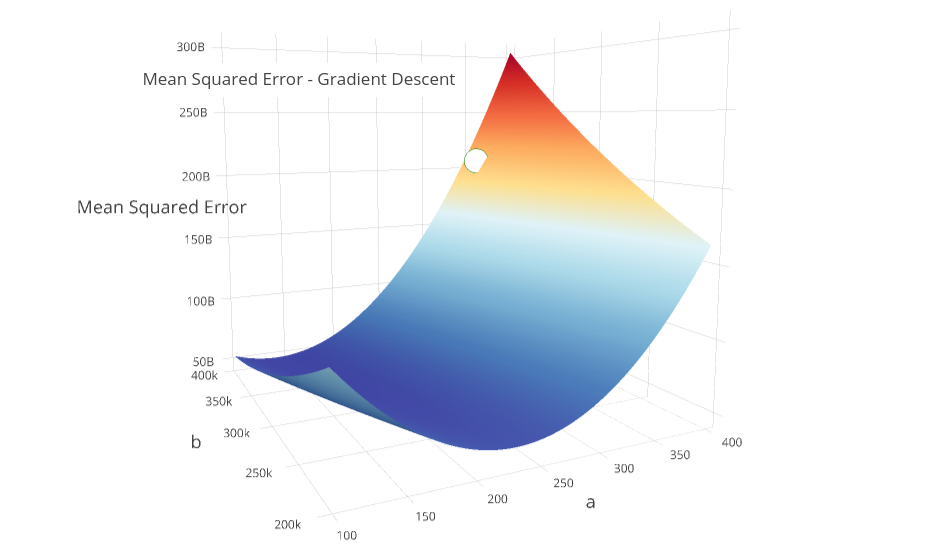

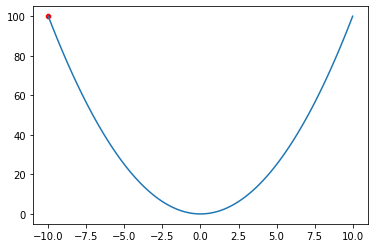

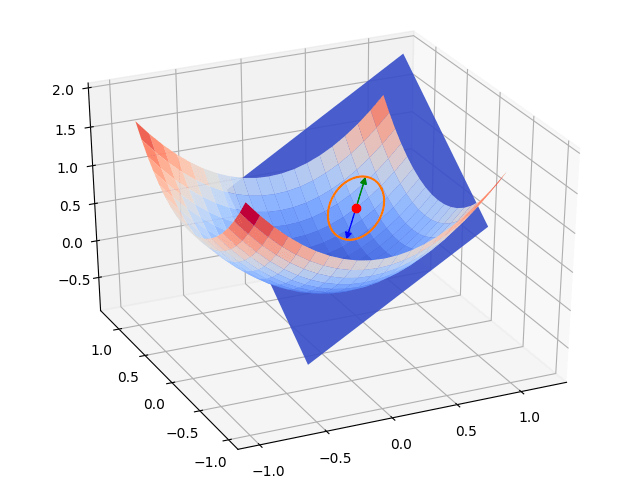

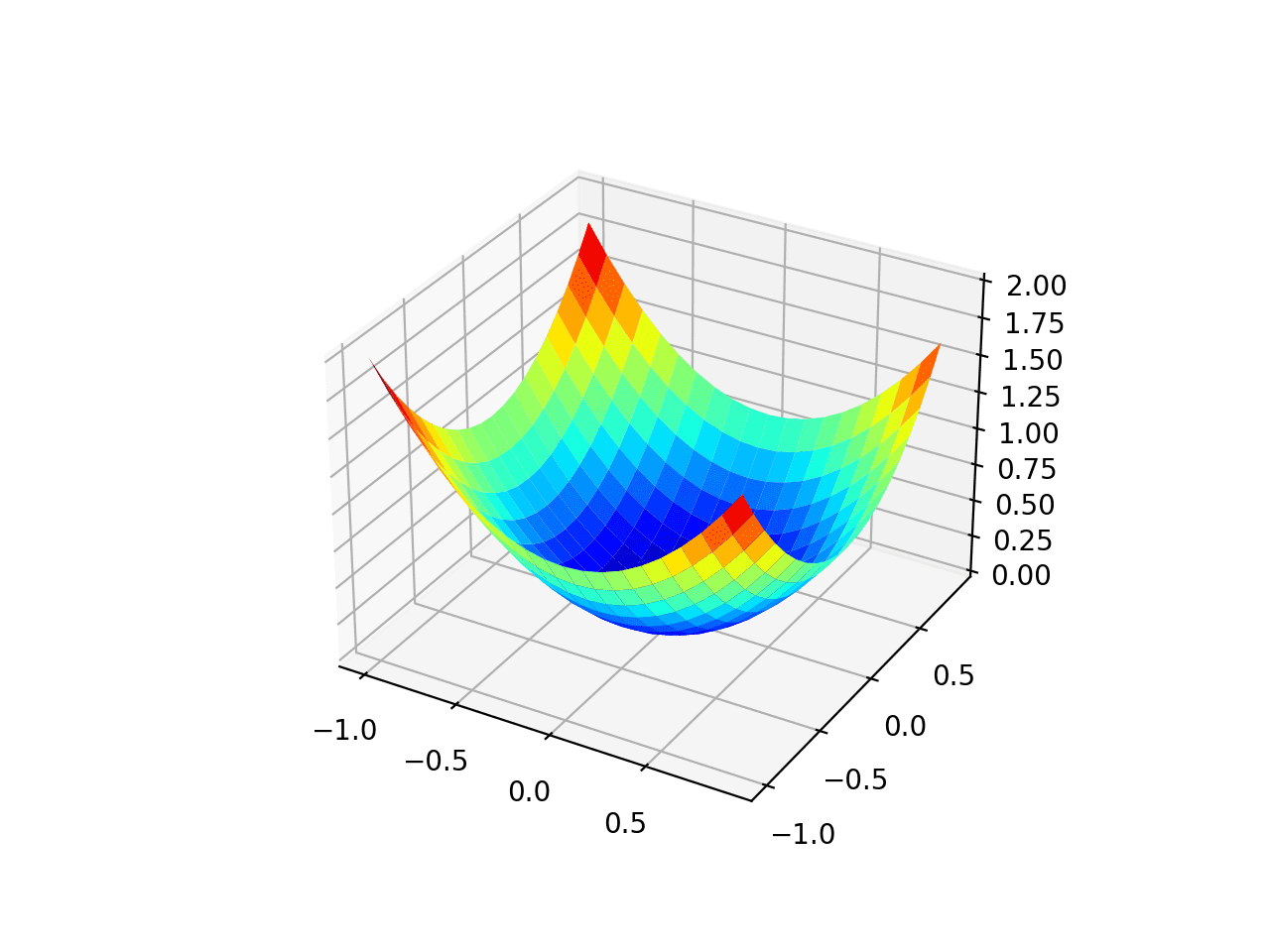

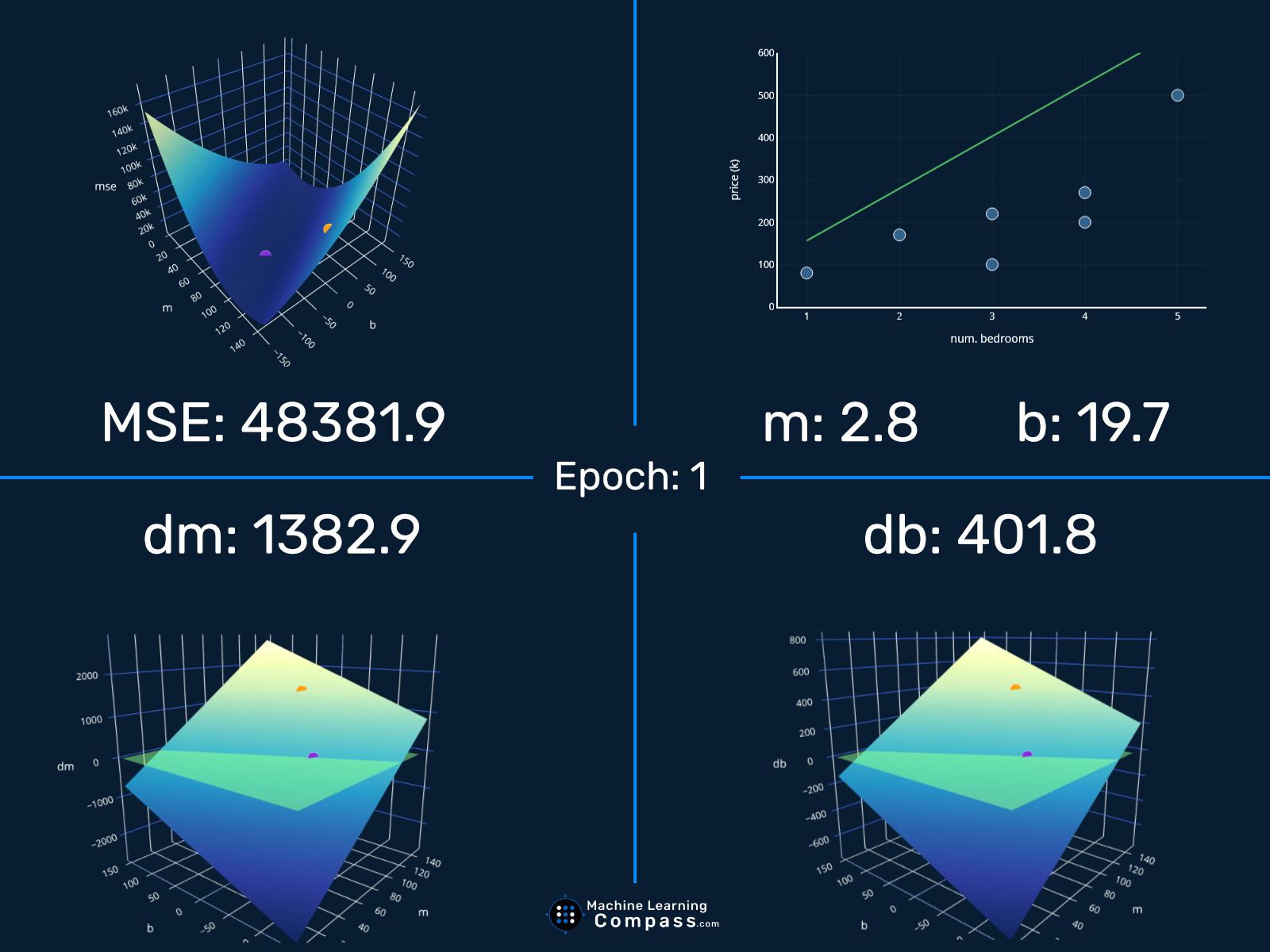

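In the gradient descent method of optimization, a hypothesis function, $h_{\boldsymbol{\theta}}(x)$, is fitted to a data set, $(x^{(i)}, y^{(i)})$ ($i = 1, 2, \ldots, m$), by minimizing an associated cost function, $J(\boldsymbol{\theta})$, with respect to the parameters $\boldsymbol{\theta} = (\theta_0, \theta_1, \ldots)$. The cost function measures how closely the hypothesis fits the data for a given choice of $\boldsymbol{\theta}$: the smaller $J(\boldsymbol{\theta})$, the better the fit. Starting from an initial guess, the method repeatedly moves $\boldsymbol{\theta}$ a small step in the direction of $-\nabla J(\boldsymbol{\theta})$, which is the direction in which the cost decreases most steeply.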
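The paragraph above does not fix a particular hypothesis or cost function. The sketch below therefore assumes a straight-line hypothesis, $h_{\boldsymbol{\theta}}(x) = \theta_0 + \theta_1 x$, and the common squared-error cost, $J(\boldsymbol{\theta}) = \frac{1}{2m}\sum_{i=1}^{m}\left(h_{\boldsymbol{\theta}}(x^{(i)}) - y^{(i)}\right)^2$. It applies the update $\theta_j := \theta_j - \alpha\,\partial J/\partial\theta_j$ with an assumed learning rate $\alpha$. The function names (`hypothesis`, `cost`, `gradient`, `gradient_descent`) are hypothetical and chosen for illustration only. The recorded `path` of $(\boldsymbol{\theta}, J)$ pairs is the kind of trajectory one would overlay on a contour plot of $J$ to visualize the descent.

```python
from typing import List, Sequence, Tuple

# Assumed model: straight-line hypothesis h_theta(x) = theta0 + theta1 * x.
def hypothesis(theta: Sequence[float], x: float) -> float:
    return theta[0] + theta[1] * x

# Assumed cost: J(theta) = 1/(2m) * sum_i (h_theta(x_i) - y_i)^2.
def cost(theta: Sequence[float], xs: Sequence[float], ys: Sequence[float]) -> float:
    m = len(xs)
    return sum((hypothesis(theta, x) - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)

# Partial derivatives dJ/dtheta0 and dJ/dtheta1 of the squared-error cost.
def gradient(theta: Sequence[float], xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    m = len(xs)
    residuals = [hypothesis(theta, x) - y for x, y in zip(xs, ys)]
    d0 = sum(residuals) / m
    d1 = sum(r * x for r, x in zip(residuals, xs)) / m
    return d0, d1

def gradient_descent(
    xs: Sequence[float],
    ys: Sequence[float],
    alpha: float = 0.1,
    n_iters: int = 1000,
    theta_init: Tuple[float, float] = (0.0, 0.0),
) -> Tuple[List[float], List[Tuple[Tuple[float, float], float]]]:
    """Return the final theta and the path of (theta, J) pairs, one per step."""
    theta = list(theta_init)
    path = [(tuple(theta), cost(theta, xs, ys))]
    for _ in range(n_iters):
        d0, d1 = gradient(theta, xs, ys)
        # Update both parameters simultaneously from the same gradient.
        theta = [theta[0] - alpha * d0, theta[1] - alpha * d1]
        path.append((tuple(theta), cost(theta, xs, ys)))
    return theta, path

if __name__ == "__main__":
    # Toy data lying exactly on y = 1 + 2x.
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.0, 3.0, 5.0, 7.0, 9.0]
    theta, path = gradient_descent(xs, ys, alpha=0.1, n_iters=1000)
    print("theta:", theta)
    print("initial cost:", path[0][1], "final cost:", path[-1][1])
```

On this toy data the iterates approach $\theta_0 = 1$, $\theta_1 = 2$. If $\alpha$ is too large, the steps overshoot and $J$ grows instead of shrinking. Plotting the $\boldsymbol{\theta}$ values in `path` shows that behaviour directly.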

Understanding gradient descent - Eli Bendersky's website

The Gradient: A Visual Descent

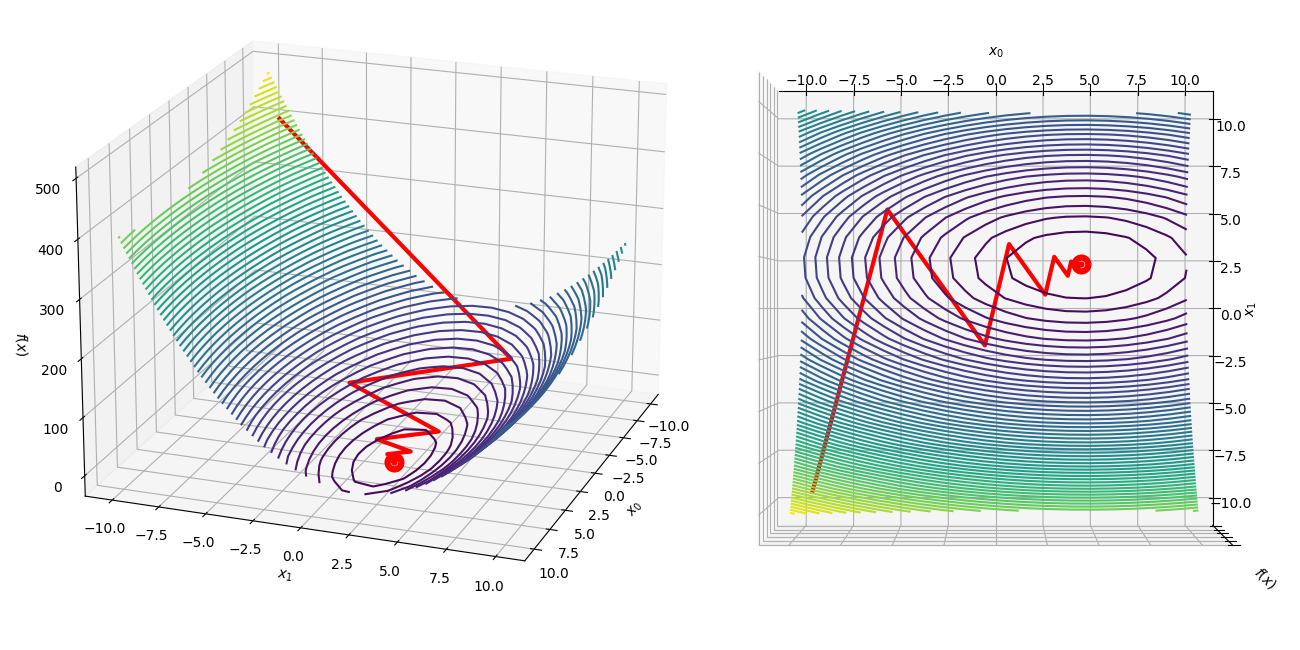

How to visualize Gradient Descent using Contour plot in Python

Variance Reduction Methods

Understanding Gradient Descent. Introduction, by Necati Demir

Reducing Loss: Gradient Descent, Machine Learning

How to Visualize Deep Learning Models

Simplistic Visualization on How Gradient Descent works

Gradient Descent from scratch and visualization

Gradient Descent With AdaGrad From Scratch

How Gradient Descent Algorithm Works - Dataaspirant

From Mystery to Mastery: How Gradient Descent is Reshaping Our World

Visualizing Newton's Method for Optimization II

Gradient Descent for Linear Regression Explained, Step by Step

de

por adulto (o preço varia de acordo com o tamanho do grupo)