8 Advanced parallelization - Deep Learning with JAX

By a mysterious writer

Description

Using easy-to-revise parallelism with xmap() · Compiling and automatically partitioning functions with pjit() · Using tensor sharding to achieve parallelization with XLA · Running code in multi-host configurations
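To make the tensor-sharding topic above concrete, here is a minimal sketch, not taken from the chapter itself. It assumes a recent JAX release in which `jax.jit` respects the sharding of its inputs, and it uses the `jax.sharding` API (`Mesh`, `NamedSharding`, `PartitionSpec`). The mesh axis name `"data"`, the array shape, and the function `scaled_sum` are illustrative assumptions. The code also runs on a single CPU device, where the mesh simply has size 1.

```python
import jax
import jax.numpy as jnp
import numpy as np
from jax.sharding import Mesh, NamedSharding, PartitionSpec as P

# Build a 1-D device mesh over whatever devices are available
# (a single device on a plain CPU install). "data" is a hypothetical axis name.
devices = np.array(jax.devices())
n = len(devices)
mesh = Mesh(devices, axis_names=("data",))

# Illustrative input: the batch dimension (4 * n) is divisible by the mesh size.
x = jnp.arange(8 * n, dtype=jnp.float32).reshape(4 * n, 2)

# Split the leading (batch) axis across the "data" mesh axis;
# the second axis is left unsharded (replicated layout along it).
x_sharded = jax.device_put(x, NamedSharding(mesh, P("data", None)))

@jax.jit
def scaled_sum(a):
    # The compiler partitions this computation based on the input sharding.
    return jnp.sum(a * 2.0, axis=1)

y = scaled_sum(x_sharded)
print(y.shape, y.sharding)
```

With several devices, each one holds a slice of the batch and computes its part of the result. With one device, the same code runs unchanged, which makes this style convenient for developing locally before scaling out.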

Compiler Technologies in Deep Learning Co-Design: A Survey

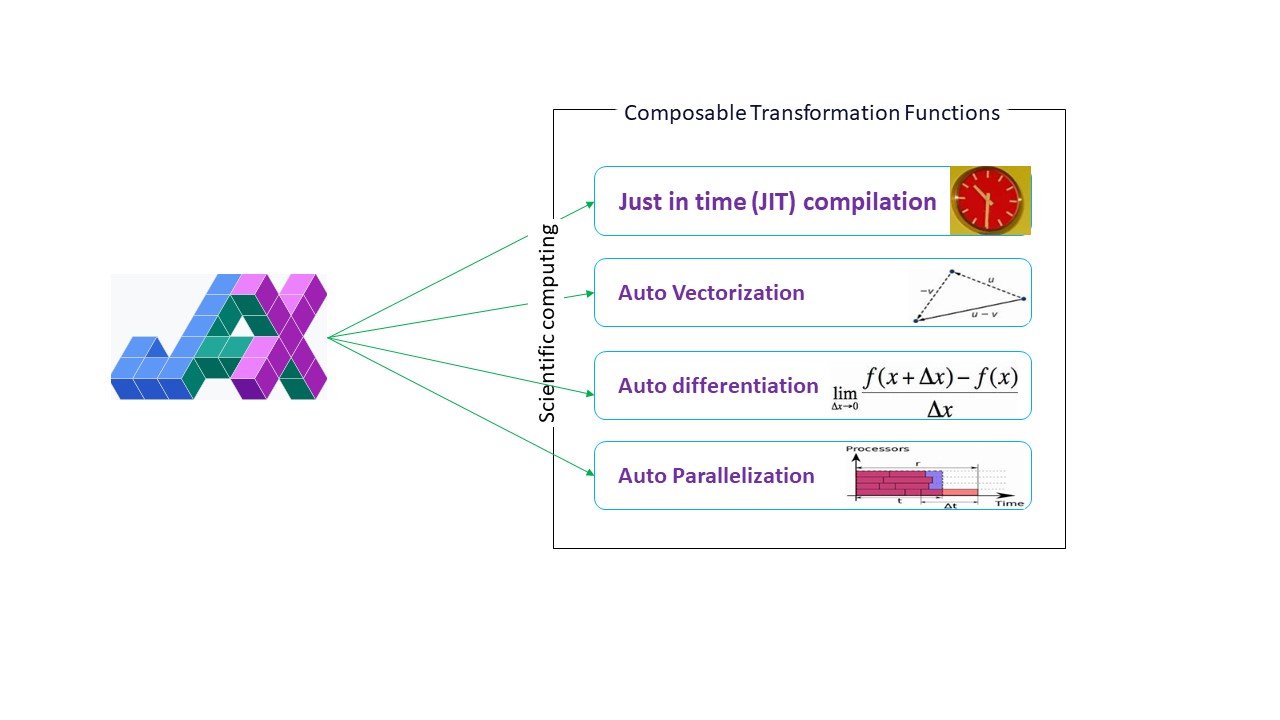

What is Google JAX? Everything You Need to Know - Geekflare

Dive into Deep Learning — Dive into Deep Learning 1.0.3 documentation

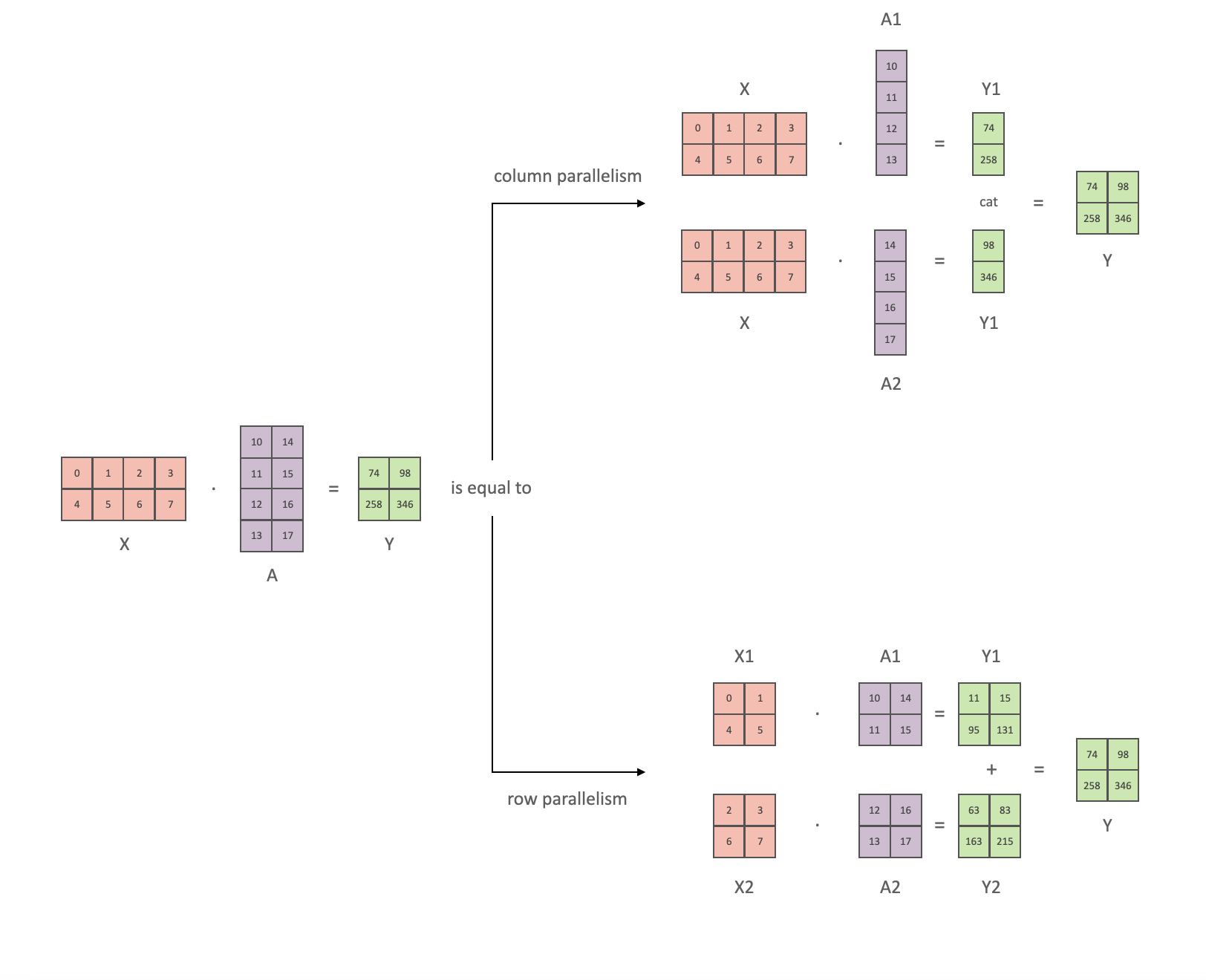

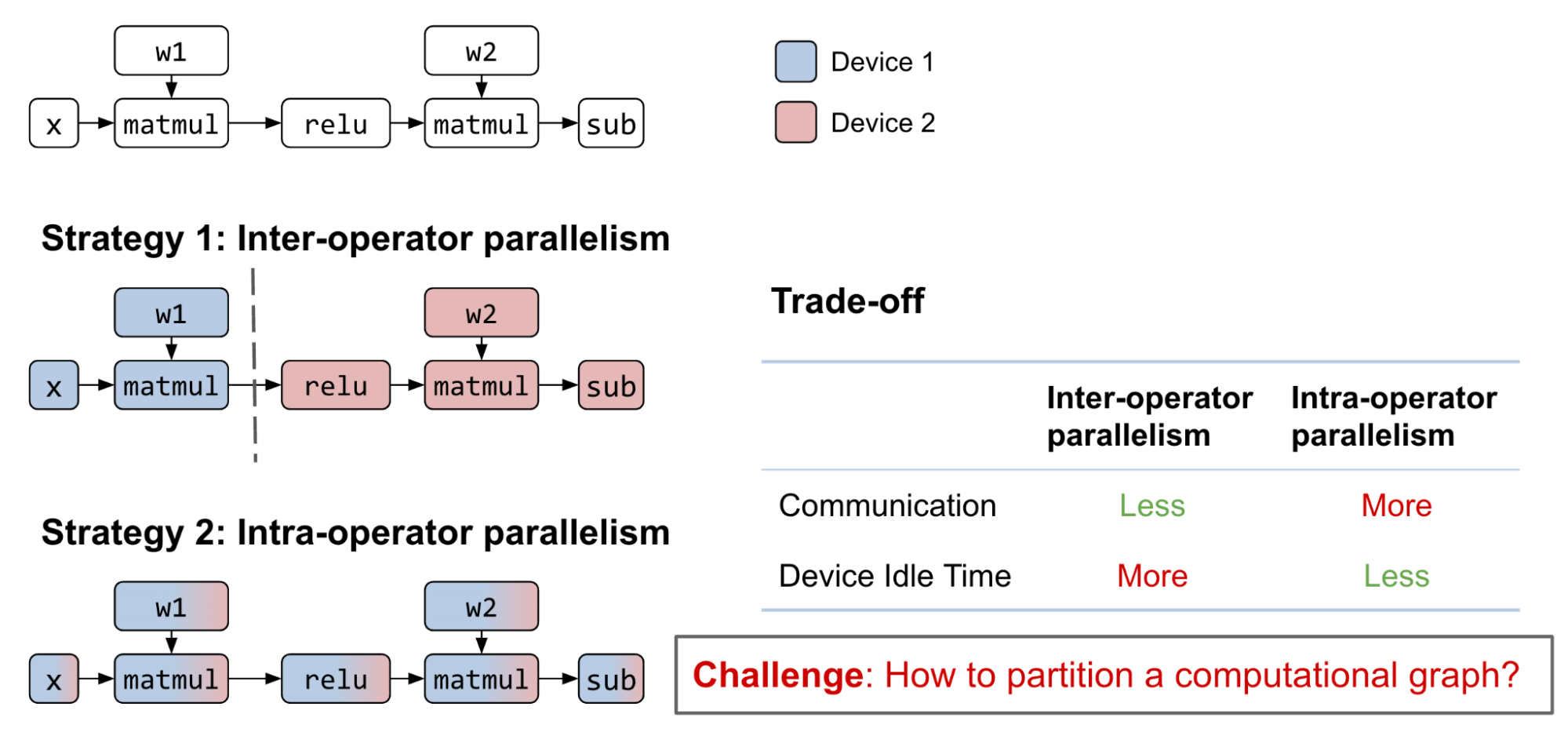

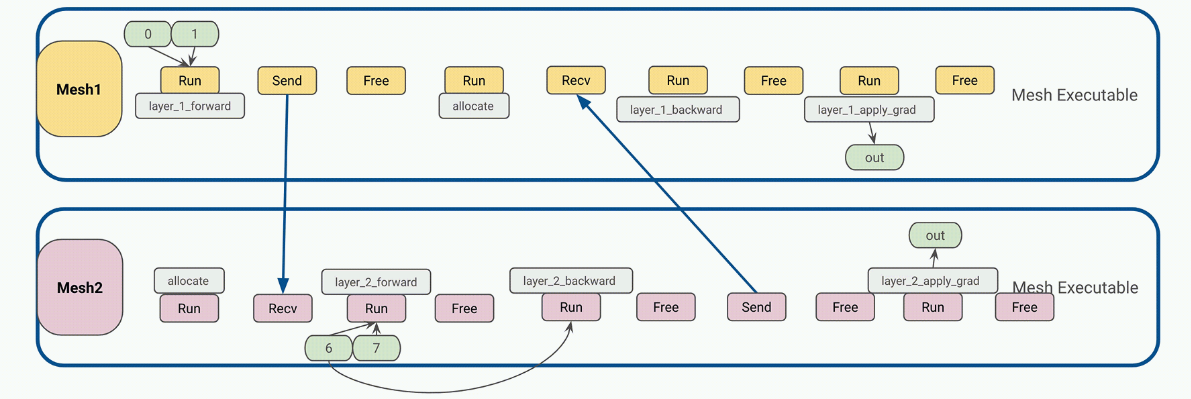

Model Parallelism

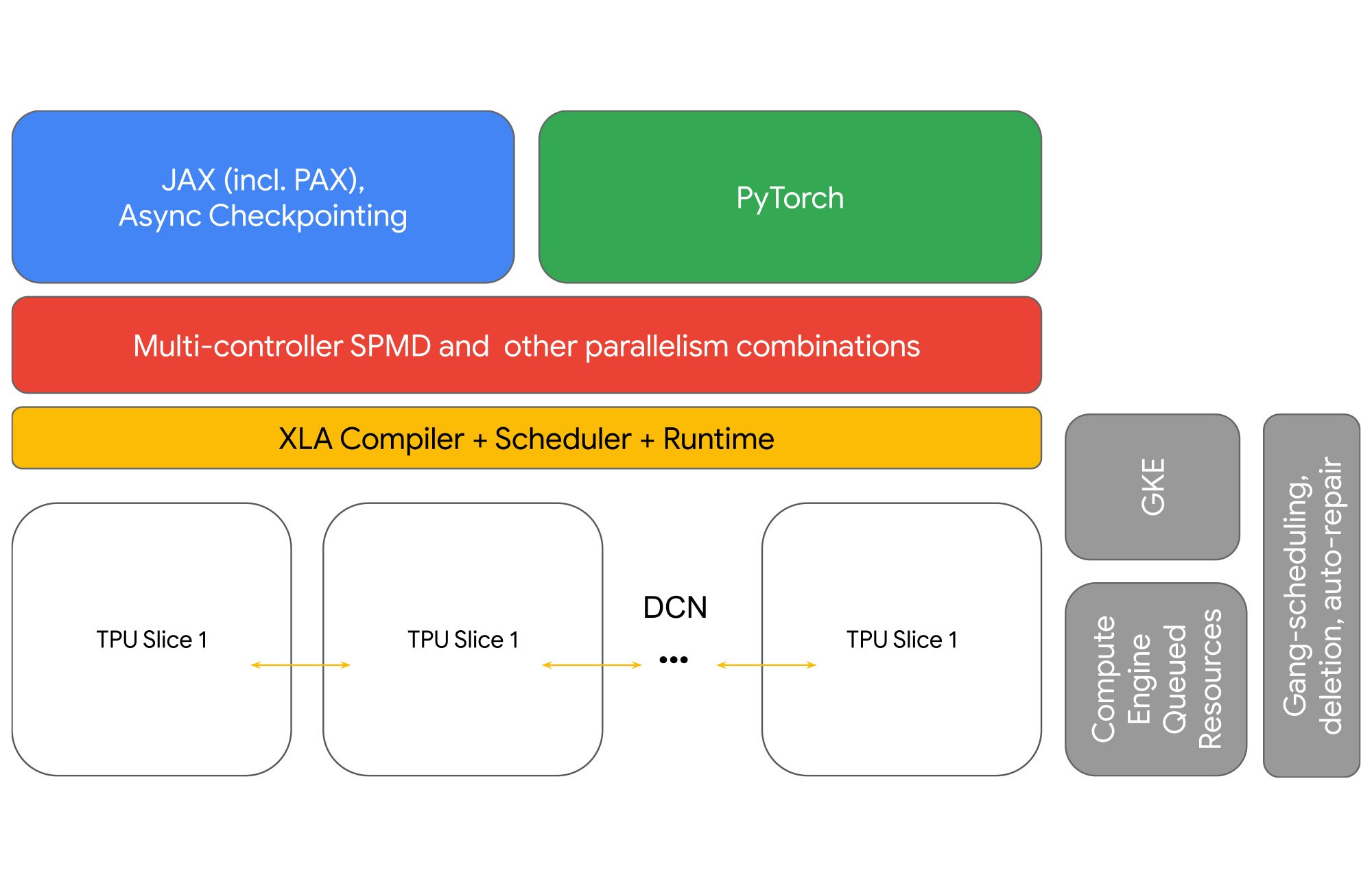

Using Cloud TPU Multislice to scale AI workloads

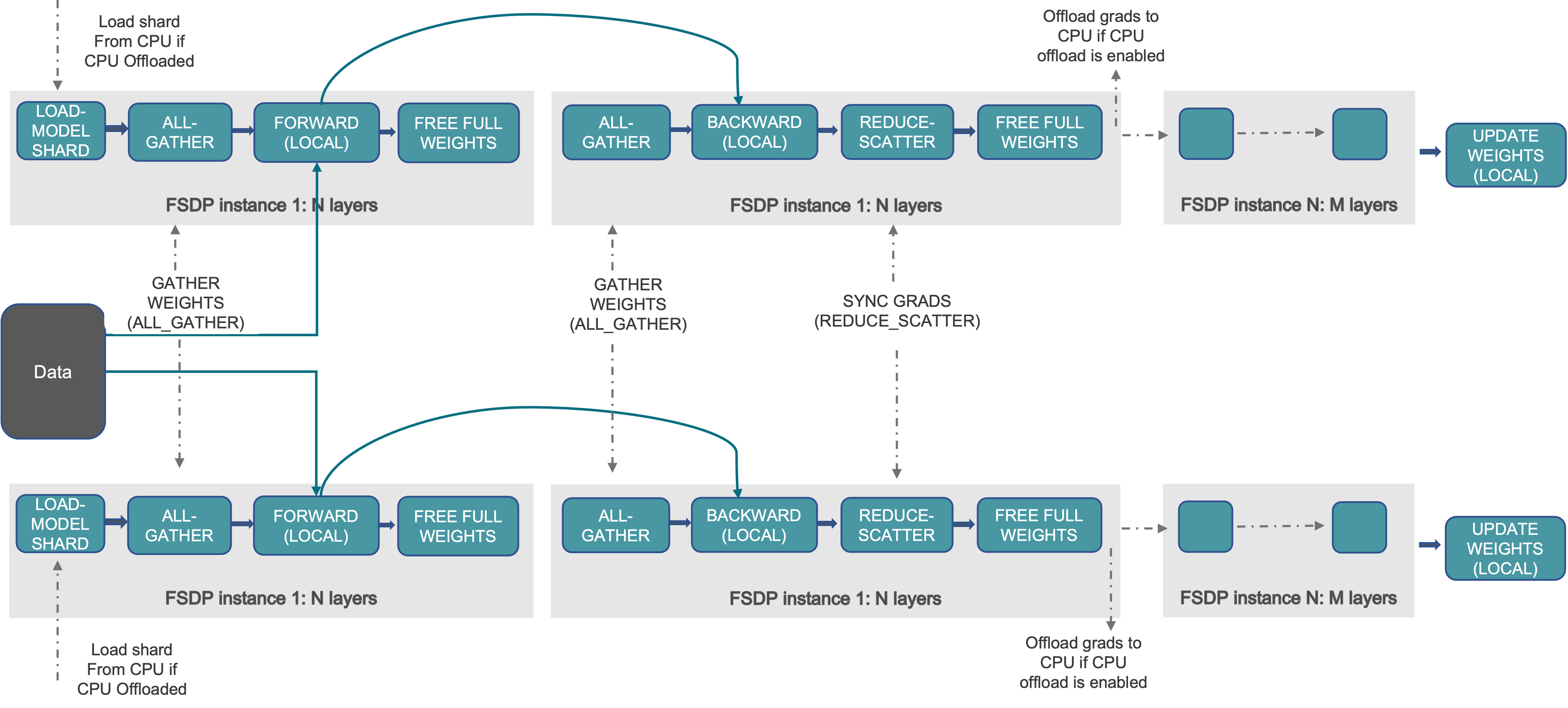

Introducing PyTorch Fully Sharded Data Parallel (FSDP) API

Efficiently Scale LLM Training Across a Large GPU Cluster with

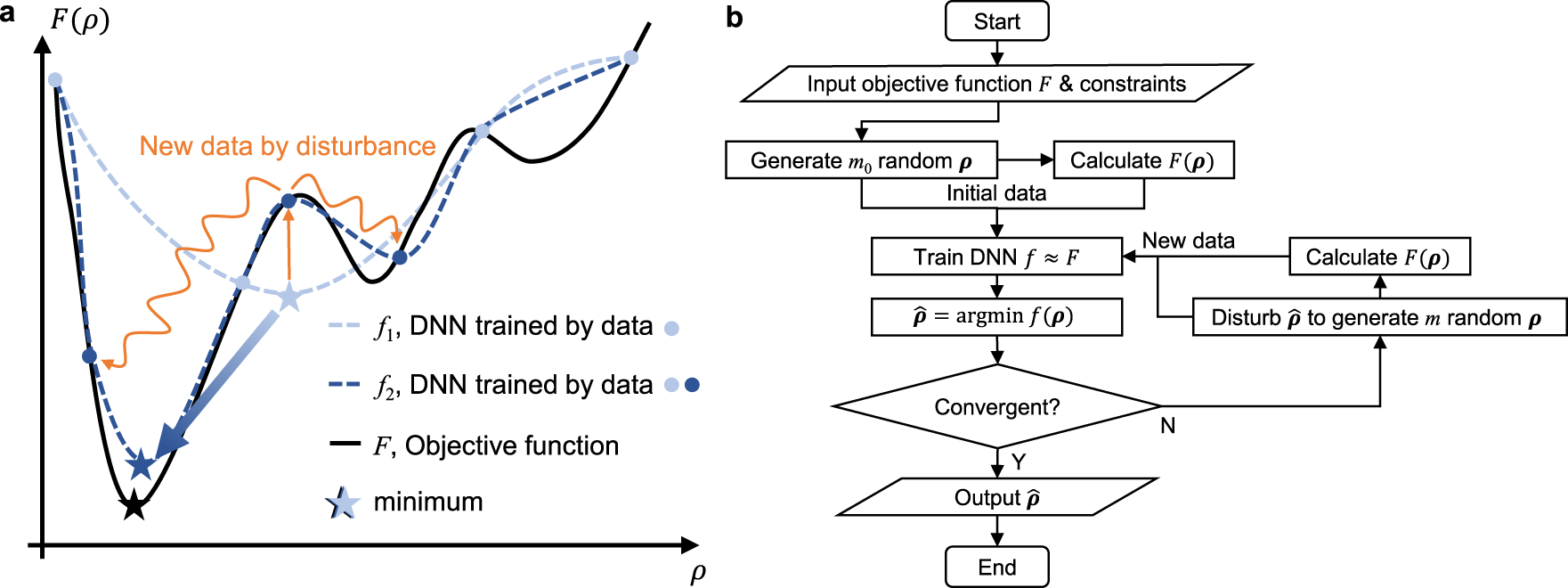

Self-directed online machine learning for topology optimization

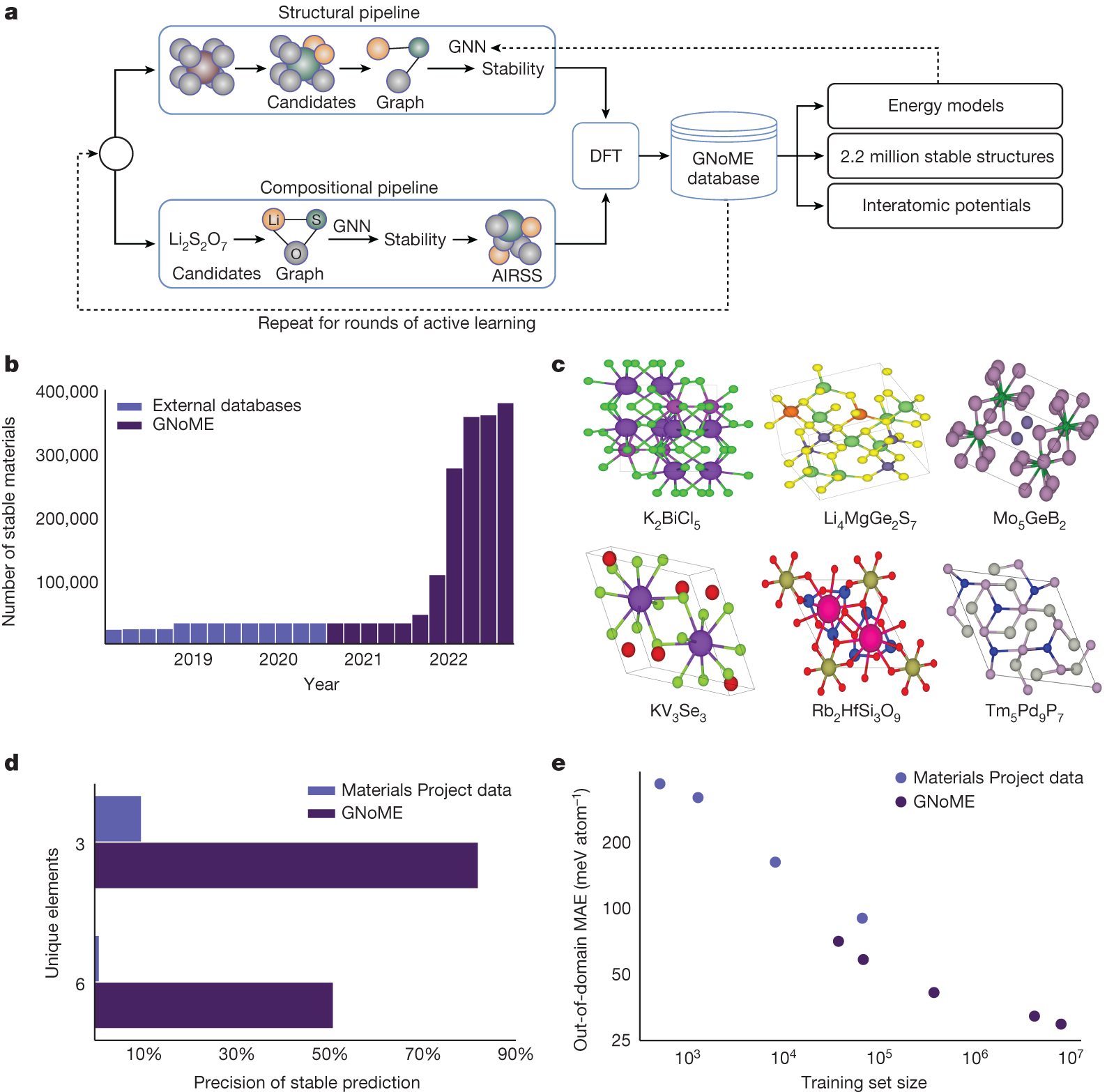

Scaling deep learning for materials discovery
